Build Intelligent Applications with LlamaIndex

Oodles builds production-grade applications using LlamaIndex to connect LLMs with private and enterprise data. We design retrieval pipelines, indexing strategies, and query engines that power reliable, scalable RAG systems across documents, databases, and APIs.

What is LlamaIndex?

LlamaIndex is a data framework purpose-built for LLM-powered applications. It enables structured ingestion, indexing, and retrieval of private or domain-specific data, forming the foundation for production-ready Retrieval-Augmented Generation (RAG) systems.

Oodles leverages LlamaIndex components such as data connectors, indexes, query engines, and response synthesizers to deliver reliable knowledge-driven applications.

Why Choose Our LlamaIndex Development?

Oodles delivers end-to-end LlamaIndex solutions optimized for accuracy, scalability, and enterprise deployment.

- Custom LlamaIndex data connectors for files, databases, and APIs

- Advanced RAG pipelines using hybrid retrieval and reranking

- Vector store integrations through LlamaIndex abstractions

- Multi-modal indexing for text and structured data

- Production deployment with observability and evaluation pipelines

Document Indexing

Ingest and index documents using LlamaIndex loaders for files, databases, and structured data sources.

Query Engines

Build LlamaIndex query engines that interpret natural language questions, retrieve relevant context, and generate context-aware responses.

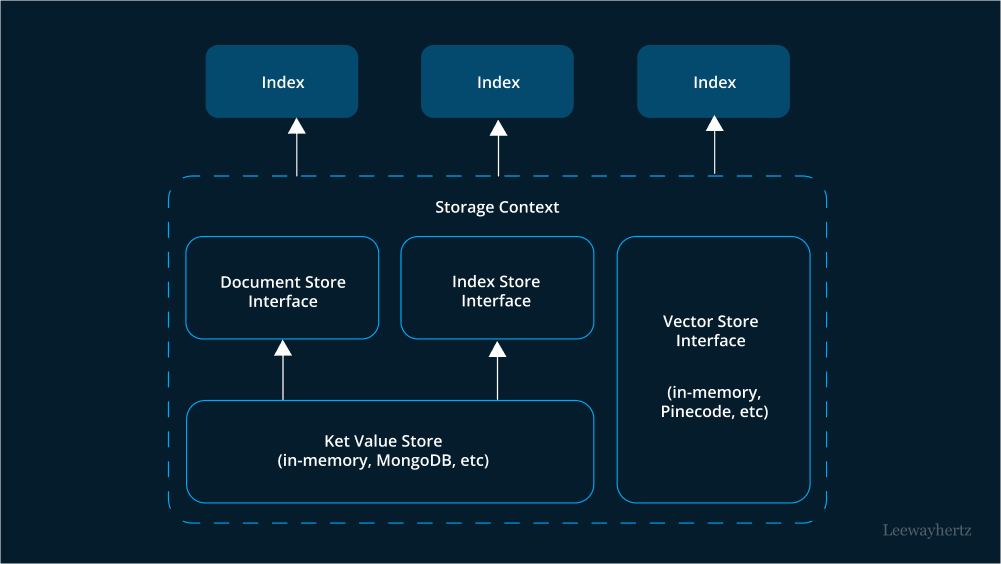

Vector Storage

Use LlamaIndex vector store integrations to connect with external vector databases through a unified interface.

LLM Agnostic

Connect LlamaIndex pipelines to different LLM providers without changing indexing or retrieval logic.
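The provider-agnostic idea above can be illustrated without any library. The sketch below is plain Python, not LlamaIndex's API: `CompletionModel`, `EchoModel`, `ShoutModel`, `retrieve`, and `answer` are hypothetical names chosen for illustration. It assumes every provider exposes a single `complete` method, so retrieval stays untouched when the model is swapped.

```python
from typing import Protocol


class CompletionModel(Protocol):
    """Hypothetical minimal interface that every LLM provider wrapper satisfies."""

    def complete(self, prompt: str) -> str: ...


class EchoModel:
    """Stand-in for provider A: returns the last line of the prompt."""

    def complete(self, prompt: str) -> str:
        return prompt.splitlines()[-1]


class ShoutModel:
    """Stand-in for provider B: returns the same line in upper case."""

    def complete(self, prompt: str) -> str:
        return prompt.splitlines()[-1].upper()


def retrieve(question: str, corpus: list[str]) -> list[str]:
    """Toy retrieval: keep passages that share at least one word with the question."""
    words = set(question.lower().split())
    return [p for p in corpus if words & set(p.lower().split())]


def answer(question: str, corpus: list[str], llm: CompletionModel) -> str:
    """Retrieval logic is fixed; only the `llm` argument changes between providers."""
    context = "\n".join(retrieve(question, corpus))
    return llm.complete(f"Context:\n{context}\nQuestion: {question}")
```

Calling `answer(question, corpus, EchoModel())` and `answer(question, corpus, ShoutModel())` runs the same retrieval step and differs only in the final model call, which is the property the paragraph describes.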

How LlamaIndex Development Works

Oodles follows a structured delivery approach to implement production-ready LlamaIndex RAG applications.

1. Data Source Analysis & Planning: Identify data sources, document formats, and retrieval requirements for LlamaIndex pipelines.

2. Data Ingestion & Indexing: Use LlamaIndex loaders and parsers to ingest data and create optimized index structures.

3. Query Engine & RAG Pipeline: Build LlamaIndex query engines with retrieval, reranking, and response synthesis components.

4. LLM Integration & API: Integrate LlamaIndex with selected LLMs and expose query pipelines through secure APIs.

5. Deployment & Optimization: Deploy LlamaIndex applications with logging, tracing, evaluation, and continuous retrieval optimization.
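To make the ingestion, indexing, retrieval, and synthesis steps above concrete, here is a deliberately small, library-free sketch. It is not LlamaIndex code: `embed`, `cosine`, `build_index`, `retrieve`, and `synthesize` are hypothetical helpers, the "embedding" is a plain bag-of-words count (an assumption made so the example runs without a model), and `synthesize` only assembles the prompt an LLM would receive.

```python
import math
from collections import Counter


def embed(text: str) -> Counter:
    """Toy embedding: a bag-of-words count vector (assumption for illustration)."""
    return Counter(text.lower().split())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(a[t] * b[t] for t in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_index(documents: list[str]) -> list[tuple[str, Counter]]:
    """Ingestion and indexing: store each document next to its vector."""
    return [(doc, embed(doc)) for doc in documents]


def retrieve(index: list[tuple[str, Counter]], question: str, top_k: int = 2) -> list[str]:
    """Retrieval: rank stored documents by similarity to the question."""
    q = embed(question)
    ranked = sorted(index, key=lambda item: cosine(q, item[1]), reverse=True)
    return [doc for doc, _ in ranked[:top_k]]


def synthesize(question: str, passages: list[str]) -> str:
    """Synthesis stub: build the prompt a real LLM call would receive."""
    context = "\n".join(f"- {p}" for p in passages)
    return f"Answer using only this context:\n{context}\nQuestion: {question}"
```

In a real deployment each helper would be replaced by the corresponding framework component (loaders, an index, a retriever, a response synthesizer); the sketch only shows how the stages hand data to each other.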

Key LlamaIndex Features & Capabilities

Data Connectors

Built-in and custom LlamaIndex connectors for files, databases, and external data sources.

Index Types

LlamaIndex index abstractions such as VectorStoreIndex, SummaryIndex, and TreeIndex for different retrieval patterns.

Query Engines

Modular LlamaIndex query engines supporting sub-queries, structured queries, and multi-document retrieval.

RAG Pipelines

Retrieval pipelines built with LlamaIndex combining hybrid search, reranking, and response synthesis.
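Hybrid search needs some way to merge a keyword ranking with a vector ranking. One common choice is reciprocal rank fusion (RRF), sketched below in plain Python. This is an illustration of the technique, not a description of how any particular pipeline here is configured; the function name, the document IDs, and the default constant `k = 60` are assumptions for the example.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings: list[list[str]], k: int = 60) -> list[str]:
    """Merge several ranked lists of document IDs into one list.

    Each document scores 1 / (k + rank) per list it appears in (rank starts
    at 1); scores are summed, and ties are broken alphabetically by ID.
    """
    scores: dict[str, float] = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: (-scores[doc_id], doc_id))


# Hypothetical results from a keyword retriever and a vector retriever.
keyword_hits = ["a", "b", "c"]
vector_hits = ["b", "c", "a"]
fused = reciprocal_rank_fusion([keyword_hits, vector_hits])
```

RRF uses only rank positions, so it avoids having to normalize keyword scores and vector similarities onto a common scale. A dedicated reranking model can then reorder the fused list before response synthesis.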

Agents & Tools

LlamaIndex agent frameworks with tool calling, memory management, and multi-step reasoning.

Observability

Tracing, logging, and evaluation utilities provided by LlamaIndex for debugging and optimization.

FAQs (Frequently Asked Questions)

LlamaIndex is a data framework for LLM apps. It connects your data to LLMs via indexing, retrieval, and query engines, so you can build RAG, chatbots, and knowledge systems with less code.

LlamaIndex can ingest docs, PDFs, databases, APIs, and more. It offers built-in connectors for sources such as Google Drive, Notion, and Slack, plus custom loaders for proprietary sources. We integrate your data into unified indexes.

LlamaIndex and LangChain both enable RAG and LLM apps. LlamaIndex focuses on data ingestion, indexing, and retrieval, while LangChain adds broader orchestration. Use LlamaIndex when data-centric RAG is the priority.

Yes, LlamaIndex works with external vector databases. It supports Pinecone, Weaviate, Milvus, Chroma, Qdrant, and more through a unified API for indexing and querying. We connect your existing vector DB or recommend one.

For large corpora, LlamaIndex handles chunking, embedding, and indexing at scale, with streaming ingestion and incremental updates. We tune chunk size, overlap, and retrieval for your corpus size and latency needs.
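Chunk size and overlap are easiest to reason about with a small example. The sketch below splits text into word-based chunks; it is a simplified, library-free illustration (real splitters usually count tokens and respect sentence boundaries), and `chunk_words` is a hypothetical helper name.

```python
def chunk_words(text: str, chunk_size: int, overlap: int) -> list[str]:
    """Split text into chunks of `chunk_size` words, where consecutive
    chunks share `overlap` words."""
    if chunk_size <= 0 or not 0 <= overlap < chunk_size:
        raise ValueError("need chunk_size > 0 and 0 <= overlap < chunk_size")
    words = text.split()
    step = chunk_size - overlap  # how far the window advances each time
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


chunks = chunk_words("a b c d e f g", chunk_size=4, overlap=2)
# chunks is ["a b c d", "c d e f", "e f g"]
```

Larger chunks keep more context per retrieved passage but reduce retrieval precision and raise prompt cost; more overlap lowers the chance of splitting a relevant sentence across chunks at the price of a larger index.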

Yes, LlamaIndex supports agents. Its agent and tool abstractions combine retrieval with function calling and tool use. We build agents that query your data and orchestrate workflows.

We design RAG pipelines, deploy them to your infrastructure, and integrate them with your apps. We also provide training, documentation, and ongoing support, and help with scaling and optimizing for production.