Build Advanced AI Systems with Deep Learning

Oodles designs and delivers production-ready deep learning solutions using Python, TensorFlow, PyTorch, Keras, Hugging Face Transformers, CUDA, and distributed GPU infrastructure. We build intelligent systems powered by convolutional networks, recurrent architectures, and transformer models that enable computers to see, language models to reason, and AI systems to learn complex patterns at scale. From large-scale model training to optimized inference pipelines, our deep learning engineering focuses on accuracy, scalability, and real-world deployment.

What is Deep Learning?

Deep Learning is a specialized branch of machine learning that uses multi-layer artificial neural networks to automatically learn hierarchical representations from large volumes of data. These models are implemented using frameworks such as TensorFlow, PyTorch, and Keras and trained efficiently using GPU-accelerated computing with CUDA and distributed training frameworks.

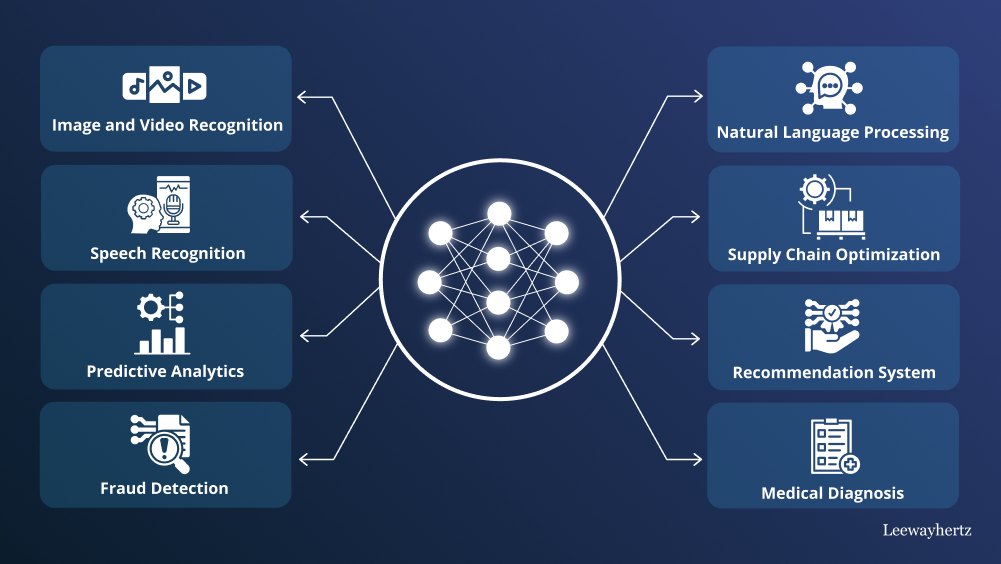

Deep learning architectures such as CNNs, RNNs, LSTMs, Transformers, GANs, and Diffusion Models power modern AI systems across computer vision, natural language processing, speech recognition, and generative AI.

Deep Learning Development Pipeline

Data Collection

Structured and unstructured datasets ingested from APIs, databases, cloud storage, and large-scale training corpora.

Preprocessing

Data cleaning, normalization, augmentation, and feature preparation using NumPy, Pandas, OpenCV, and data pipelines optimized for deep learning workloads.
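To illustrate the normalization step, here is a minimal sketch. It assumes a single numeric feature held in a plain Python list; a real pipeline would typically do this with NumPy or Pandas. The helper names `min_max_scale` and `standardize` are hypothetical. The sketch shows min-max scaling and z-score standardization:

```python
from statistics import mean, pstdev


def min_max_scale(values: list[float]) -> list[float]:
    """Rescale values linearly into [0, 1]; a constant feature maps to all zeros."""
    lo, hi = min(values), max(values)
    span = hi - lo
    if span == 0:
        return [0.0 for _ in values]
    return [(v - lo) / span for v in values]


def standardize(values: list[float]) -> list[float]:
    """Shift to zero mean and scale to unit (population) standard deviation."""
    mu = mean(values)
    sigma = pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]
    return [(v - mu) / sigma for v in values]


# Hypothetical example: raw 8-bit pixel intensities and a small numeric feature.
pixels = [0.0, 64.0, 128.0, 255.0]
print(min_max_scale(pixels))
print(standardize([1.0, 2.0, 3.0]))
```

Compute the scaling statistics on the training split only, then reuse them for the validation and test data. This prevents information from the evaluation sets leaking into training.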

Deep Model Training

Training CNNs, RNNs, Transformers, GANs, and autoencoders using TensorFlow, PyTorch, and Keras, accelerated by GPU/TPU infrastructure.

Evaluation

Model evaluation using accuracy, precision, recall, F1-score, ROC-AUC, and validation techniques commonly used in deep learning training workflows.
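The listed metrics can be computed from binary labels as shown in this illustrative sketch. It is not a replacement for a library such as scikit-learn. The function names are hypothetical, and labels are assumed to be 0/1. ROC-AUC is computed here as the probability that a randomly chosen positive scores higher than a randomly chosen negative, with ties counting as half:

```python
def binary_metrics(y_true: list[int], y_pred: list[int]) -> dict[str, float]:
    """Accuracy, precision, recall, and F1 for binary labels in {0, 1}."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {
        "accuracy": (tp + tn) / len(pairs),
        "precision": precision,
        "recall": recall,
        "f1": f1,
    }


def roc_auc(y_true: list[int], scores: list[float]) -> float:
    """Pairwise (Mann-Whitney) ROC-AUC; O(P * N), fine for small evaluation sets."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a correct ordering
    return wins / (len(pos) * len(neg))


print(binary_metrics([1, 0, 1, 1, 0, 0], [1, 0, 0, 1, 1, 0]))
print(roc_auc([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```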

Deployment & MLOps

Deployment of trained deep learning models for inference, with continuous monitoring, retraining, and MLOps workflows for lifecycle management.

Core Deep Learning Architectures

Convolutional Neural Networks (CNNs)

Image recognition, object detection, and other computer vision tasks using TensorFlow Vision, PyTorch Vision, OpenCV, and CNN-based architectures.
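The core operation inside a CNN layer is a small kernel sliding across the image. This minimal sketch assumes a single-channel image, stride 1, and no padding, and uses hypothetical helper names. Like the convolution layers in common frameworks, it computes cross-correlation, meaning the kernel is not flipped. A ReLU is then applied to the resulting feature map:

```python
def conv2d_valid(image: list[list[float]], kernel: list[list[float]]) -> list[list[float]]:
    """Stride-1, no-padding ("valid") 2D cross-correlation of one channel."""
    kh, kw = len(kernel), len(kernel[0])
    ih, iw = len(image), len(image[0])
    out = []
    for r in range(ih - kh + 1):
        row = []
        for c in range(iw - kw + 1):
            acc = 0.0
            # Weighted sum of the kh x kw patch whose top-left corner is (r, c).
            for i in range(kh):
                for j in range(kw):
                    acc += image[r + i][c + j] * kernel[i][j]
            row.append(acc)
        out.append(row)
    return out


def relu(feature_map: list[list[float]]) -> list[list[float]]:
    """Element-wise max(0, x) activation."""
    return [[max(0.0, v) for v in row] for row in feature_map]


# Hypothetical 4x4 image: dark left half, bright right half.
image = [[0.0, 0.0, 1.0, 1.0] for _ in range(4)]
# A 2x2 kernel that responds to dark-to-bright vertical edges.
edge_kernel = [[-1.0, 1.0], [-1.0, 1.0]]
print(relu(conv2d_valid(image, edge_kernel)))
```

The edge appears as a column of strong responses in the middle of the 3x3 feature map. Trained CNNs learn many such kernels instead of hand-writing them.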

Recurrent Neural Networks (RNNs/LSTMs)

Sequence modeling, time-series analysis, and NLP using LSTMs, GRUs, and other recurrent models implemented in TensorFlow and PyTorch.
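Recurrent models carry a hidden state from one time step to the next. This simplified sketch shows only the plain (Elman) recurrence h_t = tanh(W_xh x_t + W_hh h_{t-1} + b). LSTMs and GRUs add gating on top of this update, and those gates are not shown. The function name and weight layout are assumptions for illustration:

```python
import math


def rnn_forward(inputs, w_xh, w_hh, b_h, h0=None):
    """Run a vanilla (Elman) RNN over a sequence and return every hidden state.

    inputs: list of input vectors x_t, each of length input_dim
    w_xh:   hidden_dim x input_dim input-to-hidden weights
    w_hh:   hidden_dim x hidden_dim recurrent weights
    b_h:    bias vector of length hidden_dim
    """
    hidden_dim = len(b_h)
    h = list(h0) if h0 is not None else [0.0] * hidden_dim
    states = []
    for x in inputs:
        # The new state is built entirely from x_t and the previous state h_{t-1}.
        h = [
            math.tanh(
                sum(w_xh[i][k] * x[k] for k in range(len(x)))
                + sum(w_hh[i][k] * h[k] for k in range(hidden_dim))
                + b_h[i]
            )
            for i in range(hidden_dim)
        ]
        states.append(h)
    return states


# Hypothetical 1-dimensional example: an input pulse followed by silence.
states = rnn_forward([[1.0], [0.0], [0.0]], w_xh=[[1.0]], w_hh=[[0.5]], b_h=[0.0])
print(states)
```

Even after the input drops to zero, the hidden state decays gradually instead of resetting. This memory is what makes recurrent models suited to sequences.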

Transformer Architectures

Large language models, attention mechanisms, and generative architectures built using transformer-based neural networks and vision transformers.
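The attention mechanism at the heart of transformers is scaled dot-product attention, softmax(QK^T / sqrt(d_k)) V. This sketch computes it for small lists of row vectors. It is a single head with no masking and no learned projections, and the function names are hypothetical:

```python
import math


def softmax(xs: list[float]) -> list[float]:
    """Numerically stable softmax: subtract the max before exponentiating."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention(queries, keys, values):
    """Single-head scaled dot-product attention over lists of row vectors."""
    d_k = len(keys[0])
    outputs = []
    for q in queries:
        # Similarity of this query to every key, scaled by sqrt(d_k).
        scores = [sum(qi * ki for qi, ki in zip(q, k)) / math.sqrt(d_k) for k in keys]
        weights = softmax(scores)
        # Output is the attention-weighted average of the value vectors.
        outputs.append(
            [sum(w * v[j] for w, v in zip(weights, values)) for j in range(len(values[0]))]
        )
    return outputs


# Hypothetical toy inputs: one query attending over two key/value pairs.
print(attention([[1.0, 1.0]], [[1.0, 0.0], [1.0, 0.0]], [[1.0, 2.0], [3.0, 4.0]]))
```

In a full transformer, Q, K, and V come from learned linear projections of the token embeddings, and several such heads run in parallel.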

Industry-Specific Deep Learning Applications

Computer Vision & Image Recognition

Advanced image classification, object detection, and medical imaging using CNNs, vision transformers, OpenCV, TensorFlow, and PyTorch.

Natural Language Processing

Language understanding, translation, chatbots, and text generation using Transformers, Hugging Face libraries, and deep NLP models.

Speech Recognition & Synthesis

Voice assistants, speech-to-text, and text-to-speech using deep neural networks, spectrogram models, and GPU-accelerated training.

Generative AI & Content Creation

Image and text generation using GANs, VAEs, diffusion models, and large language models.

FAQs (Frequently Asked Questions)

Deep learning uses neural networks with many layers to learn hierarchical representations from data. Unlike traditional ML (linear models, trees), it excels at unstructured data—images, text, speech—and often achieves state-of-the-art accuracy with enough data.

We offer CNN development for vision, RNN and Transformer models for sequences, autoencoders for anomaly detection, and GANs for synthesis. Our work spans computer vision, NLP, recommender systems, and custom architectures.

Deep learning projects often need 10,000 or more labeled samples, although transfer learning can reduce this requirement. Compute needs vary: a single GPU can train many models, while large models need multi-GPU or cloud infrastructure. We optimize for your budget and timeline.

Yes. We use attention visualization, Grad-CAM, SHAP, and LIME. We design inherently interpretable architectures when possible. We provide reports and dashboards for model transparency.

We optimize with quantization, pruning, and TensorRT/ONNX. We deploy on GPU instances, edge devices, or serverless. We benchmark latency and throughput. We use batching and caching for efficiency.
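Quantization stores weights at lower precision to cut memory use and speed up inference. This sketch shows symmetric, per-tensor, post-training int8 quantization with hypothetical helper names. Production toolchains such as TensorRT typically use calibration data and often per-channel scales, so this is an illustration of the idea rather than any tool's exact scheme:

```python
def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the symmetric int8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from their int8 codes."""
    return [v * scale for v in q]


# Hypothetical layer weights.
weights = [0.5, -1.0, 0.25, 0.0, 0.031]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
print(q, scale)
print(max(abs(w - r) for w, r in zip(weights, restored)))
```

Each weight now fits in one byte instead of four. The rounding error per weight is at most half a quantization step (scale / 2), which is why accuracy is usually re-benchmarked after quantizing.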

Yes. We provide use-case analysis, feasibility studies, and architecture recommendations. We help prioritize projects, estimate resources, and plan rollout. We also offer training for your team.

We specialize in healthcare (medical imaging), finance (fraud, risk), retail (recommendations, demand), manufacturing (defect detection), and media (content moderation, synthesis). We adapt to your domain and compliance needs.