Build Accurate Classification & Regression Models with KNN Algorithm

Oodles designs and deploys data-driven machine learning solutions using the K-Nearest Neighbours (KNN) algorithm to solve real-world classification, regression, and similarity-search problems. Our KNN models are built using Python, NumPy, Pandas, and Scikit-learn, with robust preprocessing, feature scaling, distance-metric optimization, and production-ready deployment pipelines that integrate seamlessly into business workflows.

What is KNN (K-Nearest Neighbours)?

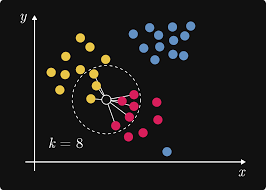

K-Nearest Neighbours (KNN) is a non-parametric, supervised machine learning algorithm used for classification and regression. Implemented commonly using Scikit-learn, KNN predicts outcomes by identifying the K closest data points in the feature space using distance metrics such as Euclidean, Manhattan, or Minkowski distance.

KNN models rely heavily on effective feature engineering and scaling using NumPy, Pandas, StandardScaler, and MinMaxScaler, making them highly interpretable and well-suited for small to medium-sized datasets.

KNN Algorithm Development Pipeline

Data Collection

Structured data collected from CSV files, databases, APIs, and business systems for supervised learning tasks.

Preprocessing

Data cleaning, normalization, and feature scaling using Pandas, NumPy, and Scikit-learn preprocessing tools (StandardScaler, MinMaxScaler).

KNN Model Training

Train KNN models using Scikit-learn’s KNeighborsClassifier and KNeighborsRegressor, selecting optimal K values, distance metrics, and weighting strategies.

Evaluation

Evaluate KNN models using accuracy, precision, recall, F1-score, confusion matrix, and regression metrics such as RMSE and MAE.

Deployment & MLOps

Deploy KNN models as Python-based REST APIs, batch prediction pipelines, or lightweight services using Flask/FastAPI, with monitoring and periodic retraining.

KNN Algorithm Applications & Use Cases

Classification Tasks

Supervised classification using labeled data for tasks such as spam detection, customer churn prediction, and medical diagnosis.

Regression Tasks

Continuous value prediction using KNN regression for price estimation, demand forecasting, and risk scoring.

Similarity Search & Recommendation

Distance-based similarity search for recommendation systems, product matching, and nearest-neighbor retrieval.

Industry-Specific KNN Applications

Recommendation Systems

Build user–item similarity models using KNN for personalized product and content recommendations.

Image Recognition & Classification

Perform feature-based image classification using KNN for digit recognition, pattern matching, and basic vision tasks.

Anomaly Detection

Identify outliers by analyzing distance-based deviations in feature space using KNN.

Medical Diagnosis & Healthcare

Support diagnosis by comparing patient data with nearest historical cases using KNN-based similarity analysis.

Our KNN Algorithm Development Methodology at Oodles

Discovery

Requirements, data audit, feasibility

PoC

Prototype KNN model with sample data and distance metrics

MVP

Production-ready KNN model with optimized K-value and features

Scale

KNN model deployment, monitoring, and performance optimization

FAQs (Frequently Asked Questions)

KNN is a supervised machine learning algorithm used for classification and regression. It works by finding the K closest data points (neighbours) to a new input and making predictions based on their labels or values. The algorithm uses distance metrics (Euclidean, Manhattan, Minkowski) to determine proximity. It's non-parametric, meaning it makes no assumptions about data distribution, and is particularly effective for pattern recognition and recommendation systems.

KNN excels in recommendation systems (product, content, user matching), image recognition and classification, pattern detection, credit scoring, medical diagnosis, customer segmentation, anomaly detection, and handwriting recognition. It's particularly effective when decision boundaries are irregular and when you need an intuitive, interpretable model. Our implementations cover e-commerce recommendations, fraud detection, healthcare diagnostics, and personalization engines.

We optimize KNN through feature scaling (standardization/normalization), dimensionality reduction (PCA, LDA), K-value tuning using cross-validation, distance metric selection, weighted voting schemes, and efficient data structures (KD-trees, Ball trees). We implement approximate nearest neighbor algorithms for large datasets, use GPU acceleration when applicable, and apply feature engineering to improve distance calculations. Our optimization reduces computational complexity while maintaining accuracy.

KNN faces challenges with large datasets (computational cost), high dimensionality (curse of dimensionality), imbalanced classes, and sensitivity to irrelevant features. We address these through approximate nearest neighbor algorithms, dimensionality reduction techniques, weighted distance metrics, outlier detection and removal, efficient indexing structures, and ensemble methods. We also implement sampling strategies and use distance-weighted voting to handle imbalanced data effectively.

We determine optimal K through cross-validation (k-fold, leave-one-out), elbow method analysis, grid search with performance metrics, and domain knowledge consideration. We test odd K values to avoid ties in binary classification, evaluate bias-variance tradeoff (small K = high variance, large K = high bias), and use validation curves to visualize performance. Typical starting point is K = sqrt(n) where n is the dataset size, then we fine-tune based on specific use case requirements.

Yes, with proper optimization. We implement approximate nearest neighbor libraries (FAISS, Annoy, NMSLIB), distributed computing frameworks (Spark MLlib), caching strategies for frequent queries, batch prediction pipelines, and model serving optimizations. We use indexing techniques to reduce search complexity from O(n) to O(log n), implement real-time prediction APIs with sub-second response times, and design scalable architectures using cloud infrastructure for handling millions of predictions daily.

We implement Euclidean distance (continuous features, equal importance), Manhattan distance (high-dimensional data, grid-like paths), Minkowski distance (generalized metric), Cosine similarity (text/document similarity, direction matters), Hamming distance (categorical data), and Mahalanobis distance (correlated features). Selection depends on data type, feature scales, and domain requirements. We provide guidance on metric selection and can implement custom distance functions for specialized applications.