Bring Your AI to Life with Lifelike Speech Synthesis

Build natural, expressive text-to-speech (TTS) systems using Python-based neural speech synthesis models optimized with C/C++ backends for low-latency inference. Oodles AI delivers real-time, multilingual, and customizable speech synthesis solutions for voice assistants, chatbots, audiobooks, accessibility platforms, and enterprise AI applications.

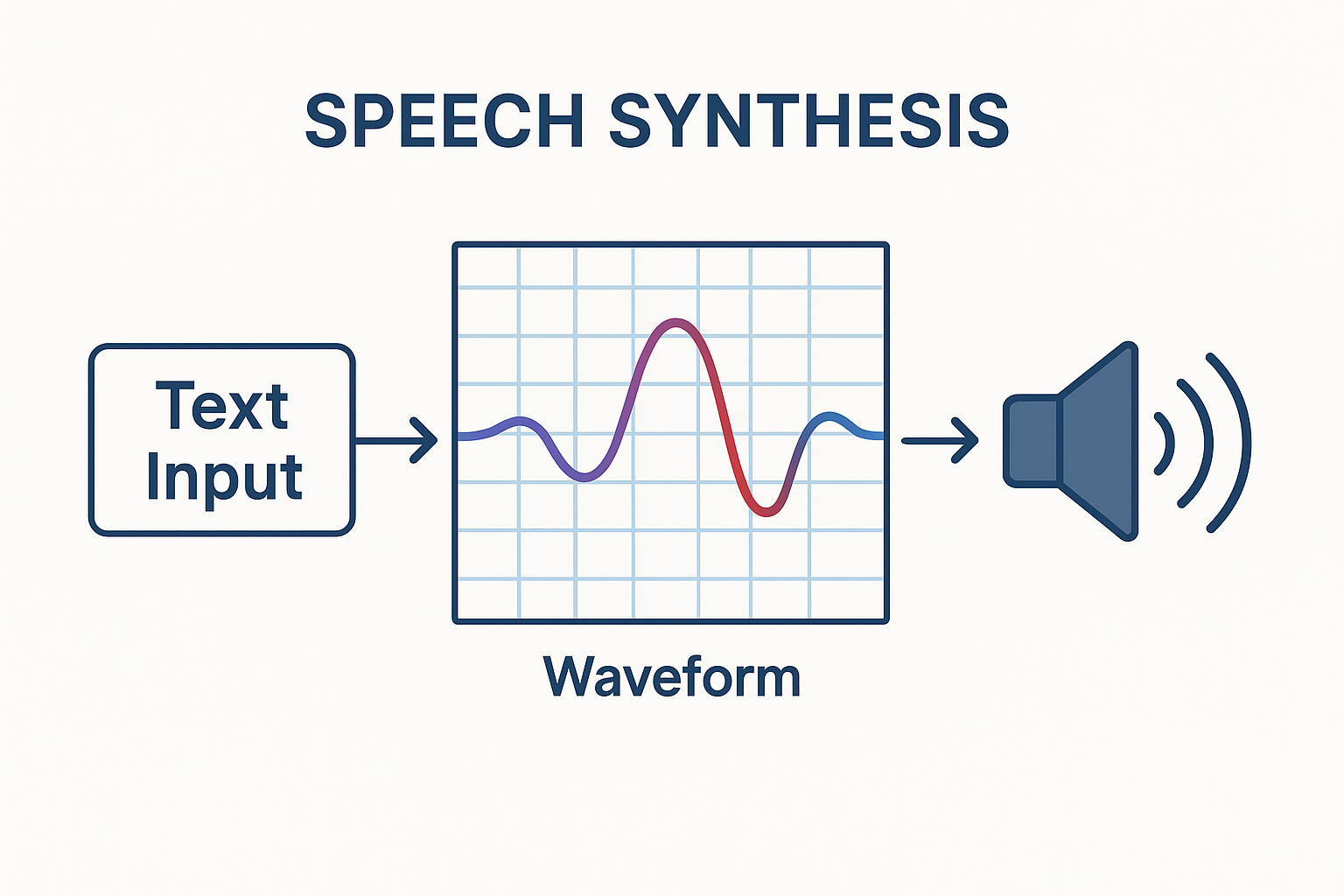

What is Speech Synthesis?

Speech synthesis (text-to-speech, or TTS) is the process of converting written text into natural-sounding speech using deep learning models. Modern TTS systems are typically built in Python for model development, with C and C++ used for high-performance audio processing and inference optimization. Acoustic models such as Tacotron 2 and FastSpeech predict spectrograms from text, vocoders such as WaveNet turn those spectrograms into audio waveforms, and end-to-end models such as VITS handle both steps in a single network. Together, these architectures produce human-like voices with realistic prosody, emotion, and multilingual support, which makes speech synthesis practical for production-grade AI systems.

What We Deliver with Speech Synthesis

Neural TTS Models

Deploy Tacotron, WaveNet, VITS, or custom models for ultra-realistic speech.

Voice Cloning

Create custom voices from just minutes of target speaker audio.

Multilingual & Accents

Support 100+ languages with regional dialects and code-switching.

Real-Time Streaming

Stream audio in low-latency chunks for interactive voice agents and live narration.
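One common way to get low first-audio latency is to split a reply into sentence-sized chunks and synthesize each chunk as soon as it is ready, instead of waiting for the whole text. The sketch below is a minimal illustration under that assumption. `stream_chunks`, `fake_synthesize`, and `max_chars` are hypothetical names, the sentence splitter is a simple punctuation heuristic, and `fake_synthesize` returns silence in place of a real TTS model.

```python
import re
from typing import Iterator

# Split after ., ! or ? when followed by whitespace (a simple sentence heuristic).
_SENTENCE_END = re.compile(r"(?<=[.!?])\s+")


def stream_chunks(text: str, max_chars: int = 120) -> Iterator[str]:
    """Yield sentence-sized chunks; long sentences are split at word boundaries."""
    for sentence in _SENTENCE_END.split(text.strip()):
        current = ""
        for word in sentence.split():
            candidate = f"{current} {word}".strip()
            if len(candidate) > max_chars and current:
                yield current  # flush before the chunk grows past max_chars
                current = word
            else:
                current = candidate
        if current:
            yield current


def fake_synthesize(chunk: str, sample_rate: int = 16000) -> bytes:
    """Hypothetical stand-in for a TTS model: 16-bit silence, 60 ms per character."""
    n_samples = sample_rate * 60 * len(chunk) // 1000
    return b"\x00\x00" * n_samples


if __name__ == "__main__":
    reply = "Hello there. This reply is streamed sentence by sentence! Ready?"
    for chunk in stream_chunks(reply):
        audio = fake_synthesize(chunk)  # a real system would send this to the client now
        print(f"{len(audio):6d} bytes <- {chunk!r}")
```

Because `stream_chunks` is a generator, the first sentence can be synthesized and played while later sentences are still being processed; `max_chars` only caps how long a single chunk may grow.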

Expressive Prosody

Control emotion, pitch, pace, and emphasis via SSML or API parameters.
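As a rough illustration of SSML-based control, the sketch below wraps plain text in the standard W3C SSML 1.1 `<prosody>`, `<emphasis>`, and `<break>` elements. It is a minimal example, not a specific engine's API: `build_ssml` and its parameters are hypothetical, engines differ in which SSML values they honor, and emotion control is usually a vendor-specific extension, so it is not shown here.

```python
from typing import Optional
from xml.sax.saxutils import escape, quoteattr

SSML_NS = "http://www.w3.org/2001/10/synthesis"


def build_ssml(text: str, rate: str = "medium", pitch: str = "medium",
               emphasis: Optional[str] = None, pause_ms: int = 0,
               lang: str = "en-US") -> str:
    """Wrap plain text in SSML 1.1 <prosody>, <emphasis>, and <break> elements."""
    body = escape(text)  # escape &, <, > so user text cannot break the markup
    if emphasis:
        phrase = escape(emphasis)
        # Stress only the first occurrence of the phrase.
        body = body.replace(phrase, f'<emphasis level="strong">{phrase}</emphasis>', 1)
    ssml = f"<prosody rate={quoteattr(rate)} pitch={quoteattr(pitch)}>{body}</prosody>"
    if pause_ms > 0:
        ssml += f'<break time="{pause_ms}ms"/>'  # trailing pause after the sentence
    return (f'<speak version="1.1" xmlns="{SSML_NS}" xml:lang={quoteattr(lang)}>'
            f"{ssml}</speak>")


if __name__ == "__main__":
    print(build_ssml("Your order has shipped today.", rate="slow", pitch="+2st",
                     emphasis="shipped", pause_ms=300))
```

Here `rate="slow"` and `pitch="+2st"` (two semitones up) use value forms defined in the SSML specification; the resulting string would be passed to whichever synthesis engine accepts SSML input.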

Enterprise Integration

Deploy on-premises, in the cloud, or in a hybrid setup, with SOC 2 and GDPR compliance.

Our Methodology

A structured, iterative approach to deliver production-grade speech synthesis solutions.

Discover

Assess voice requirements, target languages, latency, and use case.

Design

Select model architecture, voice style, and prosody controls.

Build

Train/fine-tune models, integrate SSML, and optimize inference.

Deploy & Scale

Launch with auto-scaling, monitoring, and A/B voice testing.
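A/B voice testing needs each user to hear the same voice variant on every visit. A common way to do that is deterministic hash bucketing, sketched below under the assumption of an equal traffic split. `assign_voice`, the variant names, and the experiment label are hypothetical. SHA-256 is used instead of Python's built-in `hash()`, because string hashing in Python is randomized per process and would reassign users after a restart or across servers.

```python
import hashlib
from typing import Sequence


def assign_voice(user_id: str, variants: Sequence[str],
                 experiment: str = "voice-ab-1") -> str:
    """Deterministically map a user to one voice variant for an A/B test."""
    if not variants:
        raise ValueError("at least one voice variant is required")
    # Salt with the experiment name so separate tests bucket users independently.
    digest = hashlib.sha256(f"{experiment}:{user_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % len(variants)
    return variants[bucket]


if __name__ == "__main__":
    voices = ["voice_a", "voice_b"]
    for uid in ["user-1", "user-2", "user-3"]:
        print(uid, "->", assign_voice(uid, voices))
```

Listener metrics (completion rate, satisfaction scores) can then be compared per variant; uneven splits would need weighted buckets rather than a plain modulo.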

FAQs (Frequently Asked Questions)

Speech synthesis automates IVR systems, AI receptionists, and voice assistants to deliver instant, natural responses, reducing call center workload and improving customer satisfaction.

Yes, AI speech synthesis enables custom voice modeling and branded voice assistants, ensuring consistent tone, pronunciation, and messaging across all customer touchpoints.

Speech synthesis solutions are widely used in eLearning, audiobooks, and digital media to generate scalable, high-quality voice narration with multilingual support.

Neural text-to-speech uses deep learning models to generate lifelike speech with natural pauses, emotional tone, and human-like inflection, unlike the robotic-sounding output of traditional rule-based and concatenative TTS engines.

Yes, speech synthesis integrates seamlessly with chatbots, voice bots, and AI assistants to deliver end-to-end conversational AI solutions with real-time voice responses.

Industries including healthcare, fintech, telecom, eCommerce, automotive, and SaaS use speech synthesis for voice automation, accessibility solutions, and AI-driven communication.

Enterprise speech synthesis solutions are built on cloud-native architecture, enabling real-time voice generation at scale with high availability and low latency.