Build Production-Ready Deep Learning Models with Keras

Oodles builds scalable, production-grade deep learning solutions using Keras with TensorFlow to accelerate model development and deployment. Our engineers leverage Python, Keras APIs, TensorFlow backends, GPU acceleration, and distributed training pipelines to deliver high-performance models for computer vision, natural language processing, speech recognition, and time-series forecasting. By combining Keras’ intuitive high-level abstractions with TensorFlow’s low-level control, Oodles enables faster experimentation, reproducible training, and seamless transition from research to enterprise-scale production environments.

What is Keras?

Keras is a high-level deep learning framework written in Python and natively integrated with TensorFlow. It provides modular, reusable components for defining neural networks, training workflows, and deployment pipelines, enabling developers to build reliable deep learning systems with minimal boilerplate code.

Keras supports both the Sequential and Functional APIs, allowing flexible model design while maintaining compatibility with TensorFlow’s execution engine, distributed training, and hardware acceleration.
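As a rough illustration of the two styles, the sketch below builds the same small classifier once with the Sequential API and once with the Functional API. The function names, input dimension, and layer sizes are illustrative assumptions, not taken from a specific project.

```python
import tensorflow as tf


def build_sequential(input_dim: int = 20, num_classes: int = 3) -> tf.keras.Model:
    """Sequential API: a plain stack of layers, one input and one output."""
    return tf.keras.Sequential([
        tf.keras.Input(shape=(input_dim,)),
        tf.keras.layers.Dense(32, activation="relu"),
        tf.keras.layers.Dense(num_classes, activation="softmax"),
    ])


def build_functional(input_dim: int = 20, num_classes: int = 3) -> tf.keras.Model:
    """Functional API: layers are called on tensors, which also allows
    branches, shared layers, and multiple inputs or outputs."""
    inputs = tf.keras.Input(shape=(input_dim,))
    x = tf.keras.layers.Dense(32, activation="relu")(inputs)
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)
    return tf.keras.Model(inputs=inputs, outputs=outputs)
```

Both functions produce models with the same layers and parameter count. The Functional form is usually the better starting point once a design needs skip connections or more than one input.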

Why Choose Oodles for Keras Development?

- ✓ Rapid prototyping using the Keras high-level Python API

- ✓ Production-ready models powered by the TensorFlow backend

- ✓ Support for CNNs, RNNs, LSTMs, and Transformer-based architectures

- ✓ Seamless deployment to cloud, mobile, web, and edge platforms

- ✓ Expert optimization using Keras callbacks, optimizers, and tuning tools

- Intuitive: High-level API

- Flexible: Architecture design

- Scalable: TensorFlow backend

- Production-Ready: Deployment ready

How Our Keras Development Process Works

A streamlined workflow from neural network design to production deployment, leveraging Keras' simplicity and TensorFlow's power.

1. Requirements & Architecture Design: Analyze project requirements and design CNN, RNN, LSTM, or Transformer architectures using Keras Sequential and Functional APIs.

2. Model Implementation: Implement models using Keras layers (Dense, Conv2D, LSTM, Attention), configure activation functions, regularization, and compile models with TensorFlow optimizers such as Adam, RMSprop, and SGD.

3. Data Preparation & Training: Prepare datasets and train models using Keras fit(), data generators, and callbacks including EarlyStopping, ModelCheckpoint, and ReduceLROnPlateau.

4. Model Evaluation & Optimization: Evaluate models using validation and test datasets, apply hyperparameter tuning, transfer learning, and optimize inference performance using TensorFlow tools.

5. Deployment & Integration: Deploy Keras models using TensorFlow Serving, TensorFlow Lite (mobile/edge), and TensorFlow.js (web), and integrate with production systems with monitoring and retraining pipelines.
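The implementation and training steps above can be sketched roughly as follows. This is a minimal example on synthetic data, assuming a tiny two-class classifier. The function name `train_with_callbacks`, the data shapes, and all hyperparameters are placeholders chosen for illustration.

```python
import os
import tempfile

import numpy as np
import tensorflow as tf


def train_with_callbacks(checkpoint_path: str, epochs: int = 3):
    """Compile and fit a tiny classifier with the callbacks named above."""
    # Synthetic stand-in data (assumption): 128 samples, 10 features, 2 classes.
    rng = np.random.default_rng(0)
    x = rng.normal(size=(128, 10)).astype("float32")
    y = (x.sum(axis=1) > 0).astype("int32")

    model = tf.keras.Sequential([
        tf.keras.Input(shape=(10,)),
        tf.keras.layers.Dense(16, activation="relu"),
        tf.keras.layers.Dropout(0.2),  # simple regularization
        tf.keras.layers.Dense(2, activation="softmax"),
    ])
    model.compile(
        optimizer=tf.keras.optimizers.Adam(learning_rate=1e-3),
        loss="sparse_categorical_crossentropy",
        metrics=["accuracy"],
    )

    callbacks = [
        # Stop when validation loss stops improving and keep the best weights.
        tf.keras.callbacks.EarlyStopping(
            monitor="val_loss", patience=5, restore_best_weights=True),
        # Save weights only, whenever validation loss improves.
        tf.keras.callbacks.ModelCheckpoint(
            checkpoint_path, monitor="val_loss",
            save_best_only=True, save_weights_only=True),
        # Halve the learning rate after 2 epochs without improvement.
        tf.keras.callbacks.ReduceLROnPlateau(
            monitor="val_loss", factor=0.5, patience=2),
    ]
    history = model.fit(
        x, y, validation_split=0.25, epochs=epochs,
        batch_size=32, callbacks=callbacks, verbose=0)
    return model, history
```

A call such as `train_with_callbacks(os.path.join(tempfile.mkdtemp(), "best.weights.h5"))` returns the trained model and its history. The `.weights.h5` suffix is used here because recent Keras versions expect it for weights-only checkpoints.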

Key Features & Capabilities

Neural Network Architectures

Build CNNs, RNNs, LSTMs, and Transformer-based models using Keras layers, models, and TensorFlow operations.
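For instance, a single Transformer encoder block can be assembled from standard Keras layers. The sketch below is one common arrangement (self-attention, residual connection, layer normalization, feed-forward network). The function name and the default sizes are assumptions for illustration only.

```python
import tensorflow as tf


def build_transformer_encoder(seq_len: int = 10, d_model: int = 32,
                              num_heads: int = 2, ff_dim: int = 64) -> tf.keras.Model:
    """One post-norm Transformer encoder block built with the Functional API."""
    inputs = tf.keras.Input(shape=(seq_len, d_model))

    # Self-attention: the sequence attends to itself (query == value).
    attn = tf.keras.layers.MultiHeadAttention(
        num_heads=num_heads, key_dim=d_model // num_heads)(inputs, inputs)
    x = tf.keras.layers.Add()([inputs, attn])            # residual connection
    x = tf.keras.layers.LayerNormalization(epsilon=1e-6)(x)

    # Position-wise feed-forward network.
    ff = tf.keras.layers.Dense(ff_dim, activation="relu")(x)
    ff = tf.keras.layers.Dense(d_model)(ff)
    y = tf.keras.layers.Add()([x, ff])                   # second residual
    outputs = tf.keras.layers.LayerNormalization(epsilon=1e-6)(y)

    return tf.keras.Model(inputs, outputs)
```

The block keeps the input shape, so several of them can be stacked before a pooling and classification head.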

Transfer Learning & Pre-trained Models

Fine-tune pre-trained models such as ResNet, MobileNet, EfficientNet, and BERT-based architectures using Keras, reducing training time and improving accuracy.
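A typical first stage of transfer learning freezes the pre-trained backbone and trains only a new classification head. The sketch below assumes MobileNetV2 from `tf.keras.applications`, RGB images with pixel values in [0, 255], and a hypothetical five-class task. Passing `weights=None` skips the ImageNet download, which is useful for quick checks.

```python
import tensorflow as tf


def build_transfer_model(num_classes: int = 5,
                         input_shape=(96, 96, 3),
                         weights="imagenet") -> tf.keras.Model:
    """Frozen MobileNetV2 backbone with a new trainable softmax head."""
    base = tf.keras.applications.MobileNetV2(
        input_shape=input_shape, include_top=False, weights=weights)
    base.trainable = False  # freeze all backbone weights

    inputs = tf.keras.Input(shape=input_shape)
    # MobileNetV2 expects inputs scaled to [-1, 1].
    x = tf.keras.layers.Rescaling(scale=1.0 / 127.5, offset=-1.0)(inputs)
    # training=False keeps BatchNormalization layers in inference mode.
    x = base(x, training=False)
    x = tf.keras.layers.GlobalAveragePooling2D()(x)
    outputs = tf.keras.layers.Dense(num_classes, activation="softmax")(x)
    return tf.keras.Model(inputs, outputs)
```

After the head converges, a common follow-up is to unfreeze some of the top backbone layers and continue training with a much lower learning rate.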

Model Training & Optimization

Train models with custom loss functions, Keras metrics, optimizers, batch normalization, dropout, and data augmentation pipelines.
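As a small example of a custom loss, the sketch below defines an asymmetric mean squared error that penalizes under-predictions more heavily, and uses it in a regressor with batch normalization and dropout. The loss itself, its `under_weight` parameter, and the model layout are illustrative assumptions rather than a recommended recipe.

```python
import tensorflow as tf


def asymmetric_mse(y_true, y_pred, under_weight: float = 2.0):
    """MSE where errors with y_pred < y_true count `under_weight` times."""
    y_pred = tf.convert_to_tensor(y_pred)
    y_true = tf.cast(y_true, y_pred.dtype)
    err = y_true - y_pred
    ones = tf.ones_like(err)
    # err > 0 means the model predicted too low.
    weights = tf.where(err > 0, under_weight * ones, ones)
    return tf.reduce_mean(weights * tf.square(err), axis=-1)


def build_regressor(input_dim: int = 8) -> tf.keras.Model:
    """Small regressor using batch normalization and dropout."""
    model = tf.keras.Sequential([
        tf.keras.Input(shape=(input_dim,)),
        tf.keras.layers.Dense(16),
        tf.keras.layers.BatchNormalization(),
        tf.keras.layers.Activation("relu"),
        tf.keras.layers.Dropout(0.1),
        tf.keras.layers.Dense(1),
    ])
    model.compile(
        optimizer="adam",
        loss=asymmetric_mse,  # any callable taking (y_true, y_pred) works
        metrics=[tf.keras.metrics.MeanAbsoluteError()],
    )
    return model
```

For targets `[1, 1]` and predictions `[0, 2]`, the first error is an under-prediction and is weighted by 2, so the loss is (2·1 + 1·1) / 2 = 1.5.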

Deployment & Production

Deploy Keras models to TensorFlow Serving, TensorFlow Lite, TensorFlow.js, and cloud platforms using optimized inference pipelines.
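For the TensorFlow Lite path, conversion can be sketched as below. The helper name `convert_to_tflite` is hypothetical. The `quantize` flag enables the converter's default optimizations, which for a float model typically means dynamic-range quantization. Accuracy should be re-checked after conversion.

```python
import tensorflow as tf


def convert_to_tflite(model: tf.keras.Model, quantize: bool = True) -> bytes:
    """Convert a Keras model to a TensorFlow Lite flatbuffer (as bytes)."""
    converter = tf.lite.TFLiteConverter.from_keras_model(model)
    if quantize:
        # Default optimizations; shrinks weights at some possible accuracy cost.
        converter.optimizations = [tf.lite.Optimize.DEFAULT]
    return converter.convert()
```

The returned bytes can be written to a `.tflite` file and bundled with a mobile or edge application.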

Custom Layers & Callbacks

Implement custom Keras layers, loss functions, and callbacks, enabling advanced training strategies and domain-specific deep learning solutions.
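A minimal sketch of both extension points follows: a custom layer with one trainable weight vector and a custom callback that records the loss after each epoch. The class names `LearnableScale` and `LossHistory` are made up for this example.

```python
import tensorflow as tf


class LearnableScale(tf.keras.layers.Layer):
    """Multiplies each feature by a trainable scale, initialized to 1."""

    def build(self, input_shape):
        # One scale per feature along the last axis.
        self.scale = self.add_weight(
            name="scale", shape=(input_shape[-1],),
            initializer="ones", trainable=True)

    def call(self, inputs):
        return inputs * self.scale


class LossHistory(tf.keras.callbacks.Callback):
    """Collects the training loss reported at the end of every epoch."""

    def __init__(self):
        super().__init__()
        self.losses = []

    def on_epoch_end(self, epoch, logs=None):
        self.losses.append((logs or {}).get("loss"))
```

The layer can be dropped into any Sequential or Functional model, and the callback is passed to `fit()` through the `callbacks` argument like the built-in ones.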

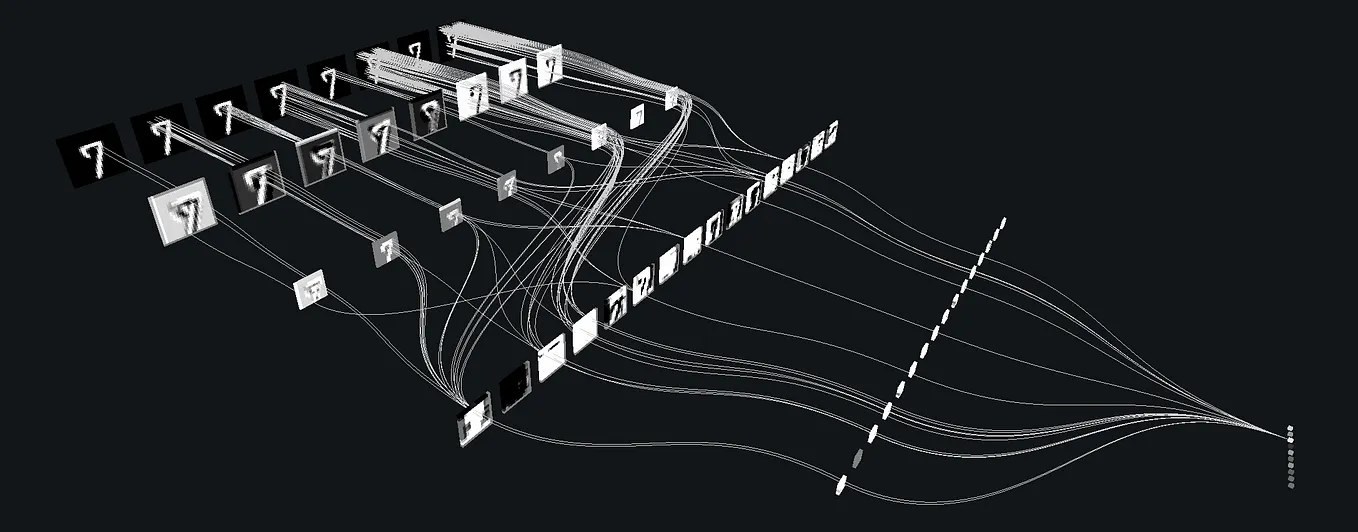

Model Interpretability

Apply Keras-compatible explainability techniques such as Grad-CAM, attention visualization, and activation mapping to understand and validate model predictions.
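Grad-CAM, for example, can be sketched in a few lines with `tf.GradientTape`. The version below assumes a Functional model with a single image input, a single class-probability output, and a named convolutional layer. The function name `grad_cam` and its arguments are illustrative.

```python
import tensorflow as tf


def grad_cam(model, image, conv_layer_name, class_index=None):
    """Return a Grad-CAM heatmap in [0, 1] for one image of shape (H, W, C)."""
    conv_layer = model.get_layer(conv_layer_name)
    # Model that exposes both the conv feature maps and the predictions.
    grad_model = tf.keras.Model(
        model.inputs, [conv_layer.output, model.output])

    batch = tf.convert_to_tensor(image[None, ...], dtype=tf.float32)
    with tf.GradientTape() as tape:
        conv_out, preds = grad_model(batch)
        if class_index is None:
            class_index = int(tf.argmax(preds[0]))  # top predicted class
        score = preds[:, class_index]

    # Gradient of the class score with respect to the feature maps.
    grads = tape.gradient(score, conv_out)
    # Channel importance: gradients averaged over the spatial dimensions.
    weights = tf.reduce_mean(grads, axis=(1, 2))
    cam = tf.reduce_sum(conv_out * weights[:, None, None, :], axis=-1)[0]
    cam = tf.nn.relu(cam)                          # keep positive evidence only
    cam = cam / (tf.reduce_max(cam) + 1e-8)        # normalize to [0, 1]
    return cam.numpy()
```

The heatmap has the spatial size of the chosen convolutional layer, so it is usually resized to the input resolution before being overlaid on the image.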

FAQs (Frequently Asked Questions)

What is Keras, and when should it be used?

Keras is a high-level neural network API and the default high-level API in TensorFlow 2.x. It simplifies model building with a user-friendly interface. Use it for fast prototyping and for production models on TensorFlow.

What Keras services does Oodles offer?

We offer Keras model development, custom layers, training pipelines, and deployment. We use the native tf.keras API and optimize for TensorFlow Serving, TFLite, and cloud ML.

Can you deploy Keras models to production?

Yes. We build Keras models and export them to SavedModel or TFLite, optimize for inference speed and size, and deploy via TensorFlow Serving, Vertex AI, or custom APIs.

How do you handle custom layers and callbacks?

We implement custom layers, losses, and metrics. We use callbacks for checkpointing, early stopping, and logging, and we ensure compatibility with TensorFlow's graph and eager modes.

Can you migrate existing Keras models to tf.keras?

Yes. We migrate Keras 2.x models to tf.keras with minimal changes. We update imports, fix deprecated APIs, and validate outputs to confirm parity and performance.

How do you optimize Keras models for inference?

We apply quantization, pruning, and conversion to TFLite, and we benchmark latency and accuracy. We use the TensorFlow Model Optimization toolkit to produce smaller, faster models.

Do you provide ongoing support and maintenance?

We provide development, deployment, and maintenance. We handle upgrades when Keras or TensorFlow versions change, and we extend models for new features and data.