Enterprise-Grade Ollama Development & Local LLM Integration Services

Oodles delivers secure, cost-efficient Ollama Development Services that let enterprises run large language models locally, with full control over data, infrastructure, and inference costs. Our solutions are built on an on-prem and edge-ready stack: the Ollama runtime, open-source LLMs (Llama 3, Mistral, Gemma, Phi-3), REST APIs, Python and Node.js backends, GPU-accelerated hardware, and secure internal networking. Together these power private, low-latency AI applications with no external cloud dependency.

What is Ollama?

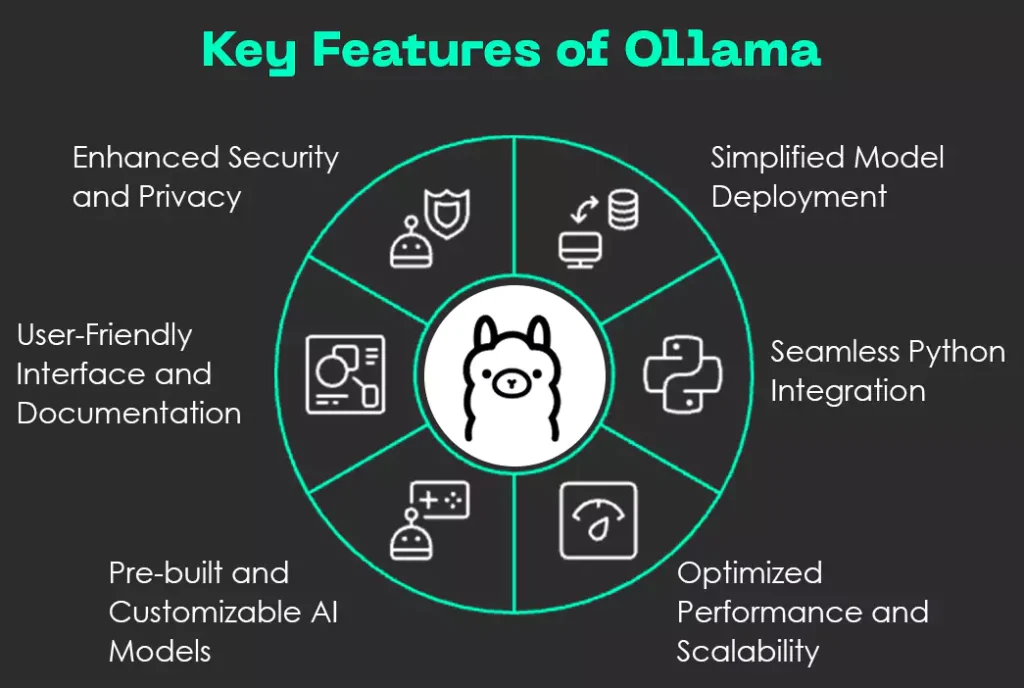

Ollama is an open-source local LLM runtime that allows organizations to run large language models directly on their own machines, servers, or edge devices. It simplifies model execution, management, and inference for modern open-source LLMs such as Llama 3, Mistral, Gemma, and Phi-3.

By using Ollama, enterprises remove their dependency on external AI APIs. Prompts and outputs stay on internal systems, responses avoid a round trip to a third-party service, costs are tied to owned hardware rather than usage, and meeting security and regulatory requirements becomes simpler.

Why Choose Oodles for Ollama Development?

Oodles provides end-to-end Ollama integration services, enabling businesses to deploy local LLMs securely across on-prem, private cloud, and edge environments with enterprise-grade reliability and performance.

- Local LLM execution with complete data ownership
- Integration with Ollama REST APIs for internal applications
- Support for GPU, CPU, and Apple Silicon acceleration
- Zero per-token or subscription inference costs
- Custom fine-tuning and model optimization workflows

Local LLM Hub

Access and manage a wide range of open-source models directly on your local infrastructure.

Data Privacy

Ensure sensitive information never leaves your server with local inference and processing.

Cost Scaling

Eliminate per-token costs and API fees by running unlimited inference on your own hardware.

Custom Models

Fine-tune and deploy custom models tailored specifically to your business requirements.

Our Ollama Integration Process

A structured, end-to-end workflow for deploying local LLMs in your enterprise environment.

Infrastructure Audit

Evaluate hardware capabilities and determine the best model size for your resources.

Model Selection

Choose optimal open-source models (Llama 3, Mistral, etc.) based on task complexity.

Local Setup & Config

Install Ollama and configure environment variables for optimal GPU acceleration.
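As a rough illustration of this step, the sketch below prepares server settings through environment variables before launching Ollama. `OLLAMA_HOST`, `OLLAMA_NUM_PARALLEL`, `OLLAMA_MAX_LOADED_MODELS`, and `OLLAMA_KEEP_ALIVE` are settings read by the Ollama server, and `CUDA_VISIBLE_DEVICES` is the usual way to restrict which NVIDIA GPUs a process sees. The default values and the helper names `build_ollama_env` and `start_server` are assumptions for the example, not recommended settings.

```python
import os
import subprocess


def build_ollama_env(host="127.0.0.1:11434", num_parallel=2,
                     max_loaded_models=1, keep_alive="10m",
                     cuda_visible_devices=None, base_env=None):
    """Return a copy of the environment with Ollama server settings applied.

    All default values here are illustrative assumptions; tune them per host.
    """
    env = dict(os.environ if base_env is None else base_env)
    env.update({
        "OLLAMA_HOST": host,                                # bind address and port
        "OLLAMA_NUM_PARALLEL": str(num_parallel),           # parallel requests per model
        "OLLAMA_MAX_LOADED_MODELS": str(max_loaded_models), # models kept in memory
        "OLLAMA_KEEP_ALIVE": keep_alive,                    # how long an idle model stays loaded
    })
    if cuda_visible_devices is not None:
        # Limit the server to specific NVIDIA GPUs, e.g. "0,1".
        env["CUDA_VISIBLE_DEVICES"] = cuda_visible_devices
    return env


def start_server(env):
    """Launch `ollama serve` with the given environment (Ollama must be on PATH)."""
    return subprocess.Popen(["ollama", "serve"], env=env)


if __name__ == "__main__":
    # Print the Ollama-related settings that would be passed to the server.
    env = build_ollama_env(cuda_visible_devices="0")
    for key in sorted(k for k in env if k.startswith("OLLAMA_")):
        print(f"{key}={env[key]}")
```

Keeping the environment builder separate from the launcher makes the configuration easy to review and test without starting a server.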

API Development

Build high-performance wrappers around Ollama's local REST API for your apps.
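A wrapper of this kind can be sketched with the Python standard library alone. The example assumes Ollama is listening on its default address, `http://localhost:11434`, and calls the `/api/generate` endpoint with streaming disabled. The function names and the `llama3` model name are placeholders chosen for illustration.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434"  # Ollama's default local address


def build_generate_request(model, prompt, base_url=OLLAMA_URL):
    """Build a POST request for Ollama's /api/generate endpoint."""
    payload = {"model": model, "prompt": prompt, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(model, prompt, base_url=OLLAMA_URL, timeout=120):
    """Send the prompt to a running Ollama server and return the generated text."""
    request = build_generate_request(model, prompt, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # With "stream": False the server returns one JSON object whose
    # "response" field holds the full completion.
    return body["response"]
```

With a server running and the model pulled, `generate("llama3", "Summarize our leave policy in one sentence.")` returns the completion as a string. A production wrapper would typically add retries, timeouts per route, and authentication in front of the endpoint.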

Scaling & Security

Implement load balancing and internal security protocols for enterprise use.
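One simple way to picture load balancing across several Ollama servers is client-side round-robin with basic health tracking. The sketch below is a generic illustration, not a description of a specific Oodles deployment. The `RoundRobinPool` class and the host URLs are hypothetical, and a dedicated reverse proxy would usually play this role in production.

```python
import itertools
import threading


class RoundRobinPool:
    """Rotate requests across Ollama hosts, skipping any marked as down."""

    def __init__(self, hosts):
        if not hosts:
            raise ValueError("at least one host is required")
        self._hosts = list(hosts)
        self._cycle = itertools.cycle(self._hosts)
        self._down = set()
        self._lock = threading.Lock()  # protects the cycle and the down set

    def mark_down(self, host):
        """Exclude a host, e.g. after a failed health check."""
        with self._lock:
            self._down.add(host)

    def mark_up(self, host):
        """Return a previously excluded host to rotation."""
        with self._lock:
            self._down.discard(host)

    def next_host(self):
        """Return the next healthy host in rotation."""
        with self._lock:
            # Try each host at most once per call.
            for _ in range(len(self._hosts)):
                host = next(self._cycle)
                if host not in self._down:
                    return host
        raise RuntimeError("no healthy Ollama hosts available")
```

An application would call `pool.next_host()` before each request and use the result as the base URL for its Ollama calls.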

FAQs (Frequently Asked Questions)

Ollama runs open-source LLMs on your machine. One command to download and run models. No cloud. Data stays local. Supports Llama, Mistral, Qwen, and many others.

Yes, Ollama can run in production. It exposes a local API, and we help deploy it on servers, Kubernetes, or air-gapped networks. Scale with load balancers and GPU clusters.

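GPU recommended. 8GB+ VRAM for smaller models such as quantized 7B. 24GB+ for mid-sized models; 70B-class models typically need more memory, multiple GPUs, or partial CPU offload. CPU-only works for small models. We assess your infra and recommend models and hardware.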
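The arithmetic behind such sizing can be sketched as a back-of-the-envelope estimate: weight memory is roughly parameter count times bytes per weight, plus headroom for the KV cache and runtime buffers. The 4-bit default and the 1.2 overhead factor below are assumptions for illustration. Real usage depends on the quantization format and context length.

```python
def estimate_memory_gb(params_billion, bits_per_weight=4, overhead=1.2):
    """Rough memory estimate in GB for loading a model.

    params_billion:  model size in billions of parameters
    bits_per_weight: quantization level (4-bit assumed by default)
    overhead:        assumed multiplier for KV cache and runtime buffers
    """
    bytes_per_weight = bits_per_weight / 8
    # Billions of parameters times bytes per parameter gives gigabytes.
    return params_billion * bytes_per_weight * overhead


def fits_in_vram(params_billion, vram_gb, bits_per_weight=4, overhead=1.2):
    """Return True if the estimated footprint fits in the given VRAM."""
    return estimate_memory_gb(params_billion, bits_per_weight, overhead) <= vram_gb


if __name__ == "__main__":
    for size in (7, 13, 70):
        print(f"{size}B at 4-bit: ~{estimate_memory_gb(size):.1f} GB")
```

Under these assumptions a 7B model needs about 4.2 GB and a 70B model about 42 GB, which is why the largest models usually call for more than a single 24GB card.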

Yes. Ollama provides an OpenAI-compatible API, so it works as a drop-in for chat, embeddings, and completion calls. We connect it to your apps, RAG pipelines, and automation.
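To show what the OpenAI-compatible interface looks like in practice, here is a minimal sketch that sends a chat request to the `/v1/chat/completions` route on a local Ollama server. It assumes the default address `http://localhost:11434`. The function names and the `llama3` model name are placeholders. Existing OpenAI client libraries can usually be pointed at the same `/v1` base URL instead of hand-building requests.

```python
import json
import urllib.request

OPENAI_COMPAT_URL = "http://localhost:11434/v1"  # assumes Ollama's default port


def build_chat_request(model, user_message, system_prompt=None,
                       base_url=OPENAI_COMPAT_URL):
    """Build an OpenAI-style chat completion request aimed at Ollama."""
    messages = []
    if system_prompt:
        messages.append({"role": "system", "content": system_prompt})
    messages.append({"role": "user", "content": user_message})
    payload = {"model": model, "messages": messages}
    return urllib.request.Request(
        f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def chat(model, user_message, system_prompt=None,
         base_url=OPENAI_COMPAT_URL, timeout=120):
    """Send the chat request and return the assistant's reply text."""
    request = build_chat_request(model, user_message, system_prompt, base_url)
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    # The response follows the OpenAI chat completion shape.
    return body["choices"][0]["message"]["content"]
```

Because the request and response shapes match the OpenAI format, switching an existing integration to a local model is mostly a matter of changing the base URL and model name.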

All inference runs on your hardware. No data leaves your network. Ideal for regulated industries. We harden deployment, access controls, and audit logs.

Ollama runs pretrained models or custom models defined in a Modelfile. We fine-tune with your data, export to GGUF, and load the result into Ollama. Support for LoRA adapters and instruction tuning.
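As an illustration of the last step, a Modelfile for an exported GGUF model can be generated with a few lines of Python. The sketch uses the `FROM`, `ADAPTER`, `PARAMETER`, and `SYSTEM` Modelfile instructions. The helper name `render_modelfile`, the file path, and the parameter values are hypothetical.

```python
def render_modelfile(base, system_prompt=None, parameters=None, adapter=None):
    """Render Modelfile text for a base model or a local GGUF file.

    base:          model name or path to a GGUF file (used in FROM)
    system_prompt: optional system message baked into the model
    parameters:    optional dict of runtime parameters, e.g. {"temperature": 0.2}
    adapter:       optional path to a LoRA adapter
    """
    lines = [f"FROM {base}"]
    if adapter:
        lines.append(f"ADAPTER {adapter}")
    for name, value in (parameters or {}).items():
        lines.append(f"PARAMETER {name} {value}")
    if system_prompt:
        # Triple quotes allow multi-line system prompts in a Modelfile.
        lines.append(f'SYSTEM """{system_prompt}"""')
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    # Hypothetical fine-tuned model exported to GGUF.
    print(render_modelfile(
        "./support-bot.gguf",
        system_prompt="You are an internal support assistant.",
        parameters={"temperature": 0.2, "num_ctx": 4096},
    ))
```

Saving the output as `Modelfile` and running `ollama create support-bot -f Modelfile` registers the model, after which `ollama run support-bot` starts it.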

We set up Ollama, integrate with your stack, and optimize for performance. Training, documentation, and ongoing support. Help scaling for multi-node setups.