Understanding LLM Orchestration: Definition and Core Concepts

At its core, LLM orchestration is the systematic coordination of language models, tools, and workflows to perform complex tasks that a single model would struggle to handle on its own.

Rather than relying on one large prompt and a single response, orchestration breaks problems into structured, manageable steps, assigning each step to the most appropriate model or tool.

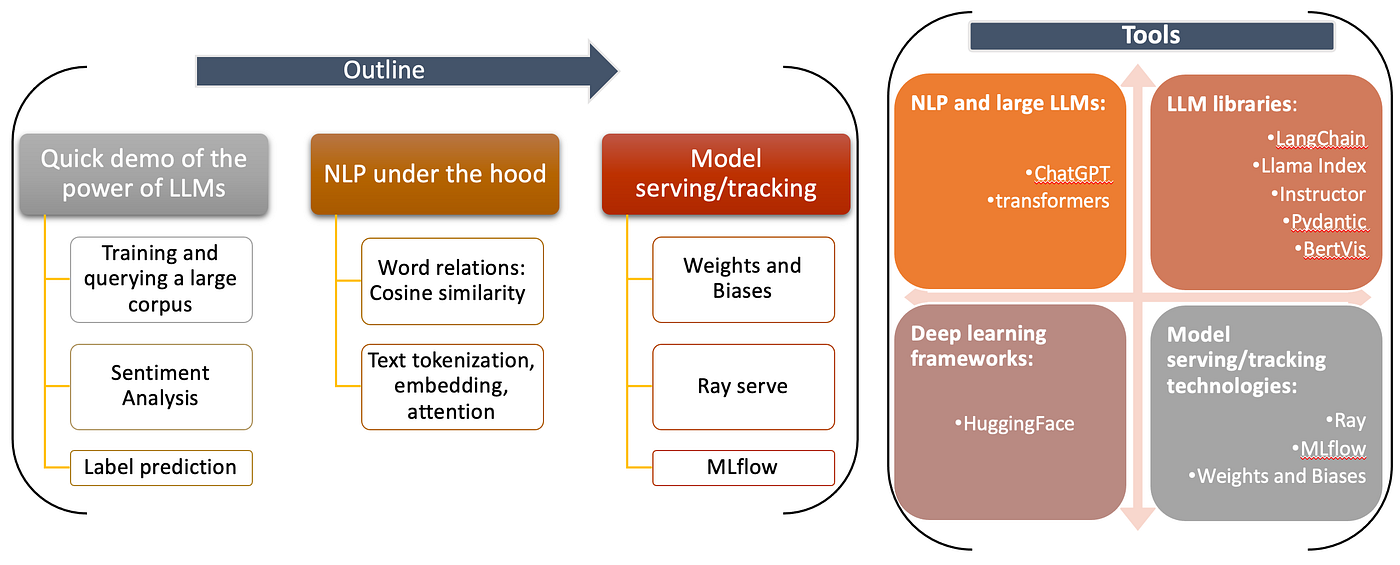

Core Concepts of LLM Orchestration

- Model Selection: Choosing the right model for the right task, such as large models for reasoning and smaller models for classification or extraction.

- Task Allocation: Decomposing complex problems into subtasks and routing them to specialized components.

- Result Synthesis: Combining outputs from multiple models into a single, coherent response.

- Context & Memory Management: Preserving relevant information across steps while controlling token usage.
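
To make the context and memory idea concrete, here is a minimal sketch of trimming conversation history to a token budget. The names `estimate_tokens` and `trim_history` are hypothetical, and the word-count estimate is a deliberately crude assumption; a real system would use the tokenizer of the model it calls.

```python
from typing import List


def estimate_tokens(text: str) -> int:
    # Crude assumption: one token per whitespace-separated word.
    return len(text.split())


def trim_history(messages: List[str], budget: int) -> List[str]:
    """Keep the most recent messages whose combined estimate fits the budget."""
    kept: List[str] = []
    used = 0
    # Walk backwards so the newest messages are kept first.
    for message in reversed(messages):
        cost = estimate_tokens(message)
        if used + cost > budget:
            break
        kept.append(message)
        used += cost
    kept.reverse()  # restore chronological order
    return kept


history = [
    "hello there",
    "please summarize the attached report",
    "focus on revenue",
]
recent = trim_history(history, budget=8)
```

With a budget of 8 estimated tokens, the oldest message is dropped and the two most recent ones are kept. Dropping whole messages is only one possible policy; summarizing older turns is a common alternative.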

Through orchestration, developers move from prompting models to engineering intelligent systems.

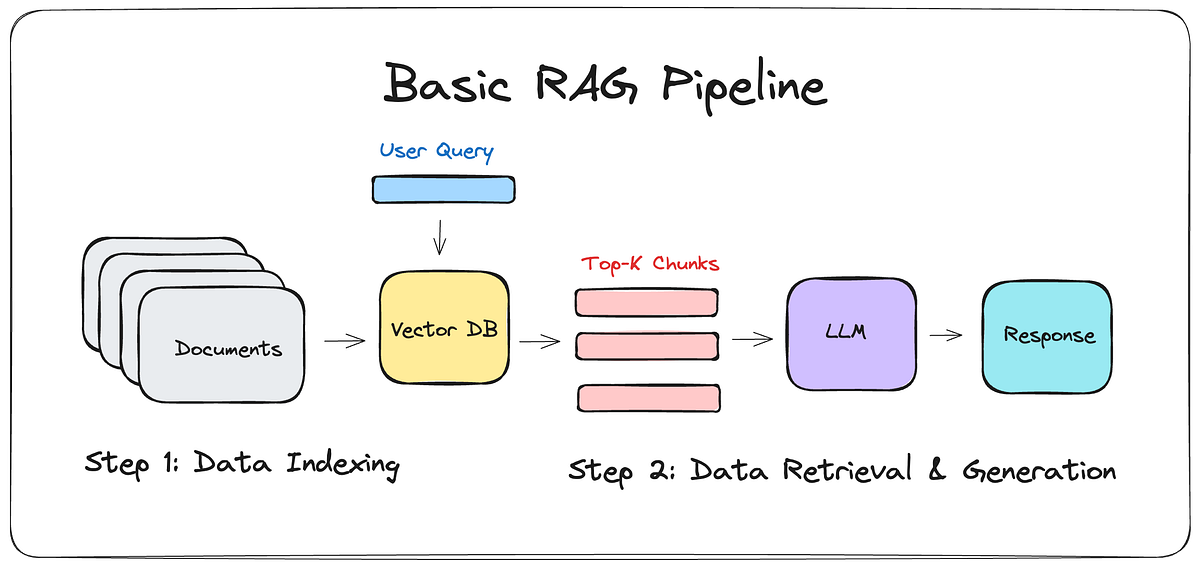

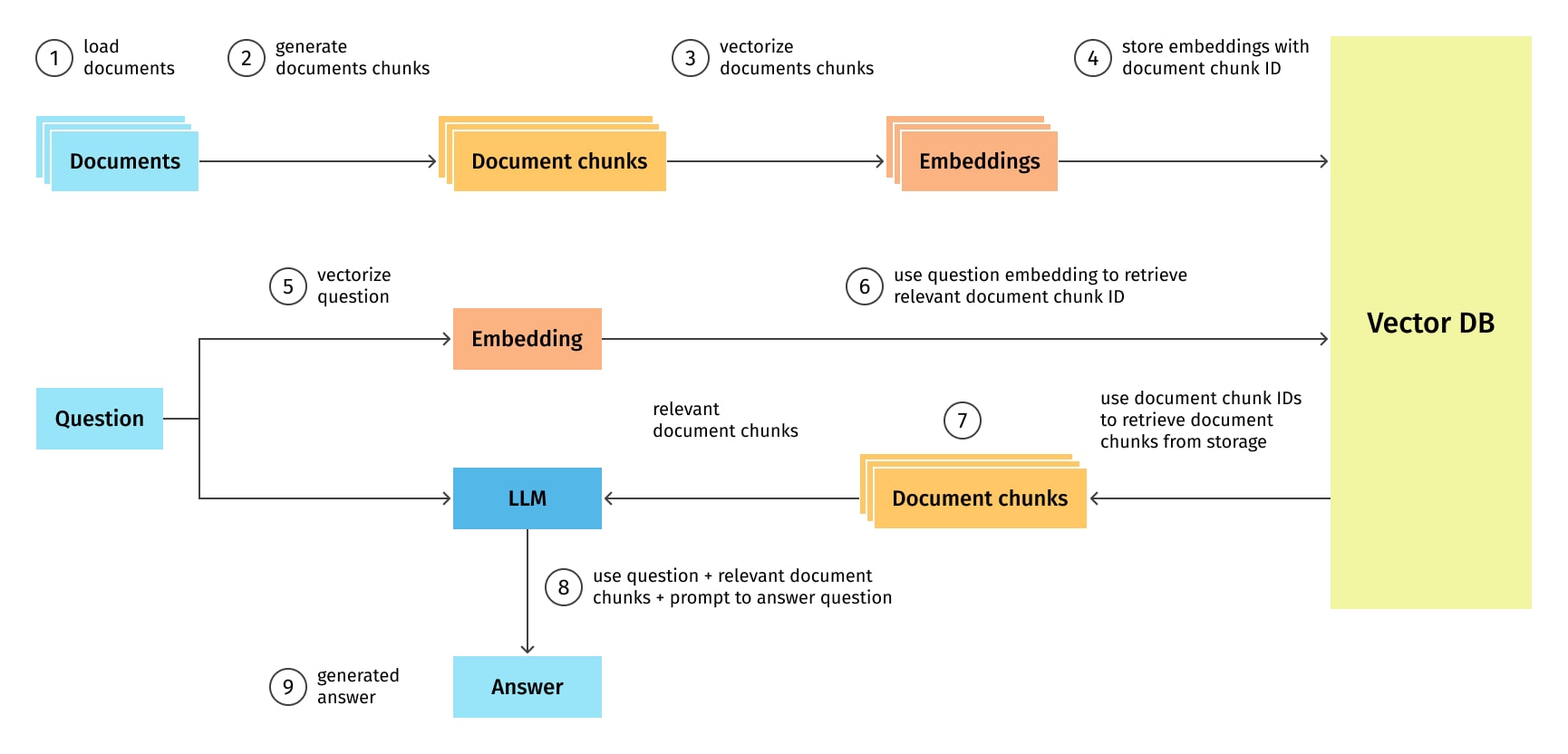

Technical Architecture: How LLM Orchestration Works

At a high level, an LLM-orchestrated system consists of several interconnected layers:

1. Model Management

This layer handles:

- Model routing (which LLM to use and when)

- Versioning and upgrades

- Cost and latency optimization

Different models may be used for reasoning, summarization, extraction, or validation.
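
Model routing can be sketched as a lookup over a small catalog. Everything in this example is an assumption for illustration: the model names, the cost figures, and the `route` function are hypothetical and do not refer to any real provider.

```python
from dataclasses import dataclass
from typing import FrozenSet, List


@dataclass(frozen=True)
class ModelSpec:
    name: str                   # hypothetical identifier, not a real model
    cost_per_1k_tokens: float   # illustrative number only
    good_at: FrozenSet[str]     # task types this model is assumed to handle well


CATALOG: List[ModelSpec] = [
    ModelSpec("small-fast", 0.1, frozenset({"classification", "extraction"})),
    ModelSpec("medium", 0.5, frozenset({"summarization", "extraction", "validation"})),
    ModelSpec("large-reasoner", 2.0, frozenset({"reasoning", "summarization", "validation"})),
]


def route(task_type: str) -> ModelSpec:
    """Pick the cheapest model that lists the task type as a strength."""
    candidates = [m for m in CATALOG if task_type in m.good_at]
    if not candidates:
        # Unknown task: fall back to the most expensive model,
        # assuming here that price tracks capability.
        return max(CATALOG, key=lambda m: m.cost_per_1k_tokens)
    return min(candidates, key=lambda m: m.cost_per_1k_tokens)


chosen = route("extraction")
```

Here `route("extraction")` selects `small-fast` because it is the cheapest model that claims the task. A production router would likely also weigh latency and measured quality, not cost alone.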

2. Task Allocation

Incoming requests are broken into steps such as:

- Data retrieval

- Interpretation

- Reasoning

- Validation

- Output formatting

Each step is assigned to the most suitable model or tool.
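
The step sequence above can be sketched as a list of functions that each take and return a shared state dictionary. This is an illustrative sketch, not a prescribed design: the step functions are stand-ins (no model or database is called), and the sample numbers are made up.

```python
from typing import Any, Callable, Dict, List, Tuple

State = Dict[str, Any]
Step = Callable[[State], State]


def retrieve(state: State) -> State:
    # Stand-in for a database query; the numbers are made up.
    return {**state, "rows": [120, 135, 150]}


def interpret(state: State) -> State:
    rows = state["rows"]
    return {**state, "change": rows[-1] - rows[0]}


def reason(state: State) -> State:
    # A real system would call a reasoning model here.
    trend = "up" if state["change"] > 0 else "flat or down"
    return {**state, "trend": trend}


def validate(state: State) -> State:
    if state["trend"] not in {"up", "flat or down"}:
        raise ValueError(f"unexpected trend: {state['trend']}")
    return state


def format_output(state: State) -> State:
    answer = f"Trend is {state['trend']} (change: {state['change']})"
    return {**state, "answer": answer}


PIPELINE: List[Tuple[str, Step]] = [
    ("retrieve", retrieve),
    ("interpret", interpret),
    ("reason", reason),
    ("validate", validate),
    ("format", format_output),
]


def run_pipeline(request: str) -> State:
    state: State = {"request": request, "trace": []}
    for name, step in PIPELINE:
        state = step(state)
        # Record which steps ran, which helps with auditing and debugging.
        state["trace"] = state["trace"] + [name]
    return state


result = run_pipeline("How did sales move this quarter?")
```

Keeping each step as a separate function means any one of them can be swapped for a different model or tool without touching the others.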

3. Tool & API Integration

LLMs interact with:

- Databases

- Search engines

- Internal APIs

- Code execution environments

This allows the system to act first and then respond.
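
One common way to wire this up is a tool registry: the model emits a structured tool call, and the orchestrator looks up and runs the matching function. The sketch below assumes a simple JSON shape with `tool` and `arguments` keys; that shape, the tool names, and the sample order data are all hypothetical and not taken from any specific provider's API.

```python
import json
from typing import Any, Callable, Dict


def lookup_order(order_id: str) -> Dict[str, Any]:
    # Stand-in for a database or internal API call.
    orders = {"A17": {"status": "shipped"}}
    return orders.get(order_id, {"status": "unknown"})


def add(a: float, b: float) -> float:
    return a + b


# Registry mapping tool names to plain Python callables.
TOOLS: Dict[str, Callable[..., Any]] = {
    "lookup_order": lookup_order,
    "add": add,
}


def dispatch(tool_call_json: str) -> Any:
    """Parse a tool call emitted by a model and run the matching tool."""
    call = json.loads(tool_call_json)
    name = call["tool"]
    if name not in TOOLS:
        # Only registered tools may run; anything else is rejected.
        raise KeyError(f"unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


# Pretend this string came back from a model.
model_output = '{"tool": "lookup_order", "arguments": {"order_id": "A17"}}'
observation = dispatch(model_output)
```

The observation would then be passed back to the model so it can compose a response grounded in the tool result.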

4. Result Synthesis & Validation

Outputs from various components are:

- Merged into structured results

- Validated against schemas or rules

- Re-run or corrected if errors are detected

Catching and correcting errors before they reach the user improves reliability and trustworthiness.
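
The validate-and-re-run loop can be sketched as follows. The required fields, the `generate_validated` helper, and the fake model are assumptions made for illustration; the only library used is Python's standard `json` module.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical schema: field name -> expected Python type.
REQUIRED_FIELDS = {"invoice_id": str, "total": float}


def validate(raw: str) -> Dict[str, Any]:
    """Parse raw model output and check it against REQUIRED_FIELDS."""
    data = json.loads(raw)  # json.JSONDecodeError is a subclass of ValueError
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for field, expected in REQUIRED_FIELDS.items():
        if not isinstance(data.get(field), expected):
            raise ValueError(f"field {field!r} missing or not {expected.__name__}")
    return data


def generate_validated(
    generate: Callable[[str], str], prompt: str, max_attempts: int = 3
) -> Dict[str, Any]:
    """Call the model until its output validates or attempts run out."""
    feedback = ""
    for _ in range(max_attempts):
        raw = generate(prompt + feedback)
        try:
            return validate(raw)
        except ValueError as err:
            # Feed the rejection reason back so the next attempt can correct it.
            feedback = f"\nPrevious output was rejected: {err}. Return valid JSON only."
    raise RuntimeError(f"no valid output after {max_attempts} attempts")


# Fake model for demonstration: fails once, then returns valid JSON.
_replies = iter(["not json", '{"invoice_id": "INV-1", "total": 42.5}'])


def fake_model(prompt: str) -> str:
    return next(_replies)


result = generate_validated(fake_model, "Extract the invoice.")
```

Capping the number of attempts matters: without a limit, a model that never produces valid output would loop forever and run up cost.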

Exploring Key Use Cases: From Chatbots to Complex Data Analysis

1. Advanced Chatbots & Virtual Assistants

Orchestrated chatbots can:

- Maintain long-term memory

- Access real-time data

- Execute actions (bookings, updates, workflows)

- Handle multi-turn, goal-driven conversations

2. Document & Knowledge Processing

LLMs can be orchestrated to:

- Parse PDFs and emails

- Extract structured data

- Validate information

- Generate summaries or insights

This is especially valuable in legal, finance, healthcare, and enterprise operations.

3. Complex Data Analysis

Multiple models can collaboratively:

- Analyze large datasets

- Interpret trends

- Generate explanations

- Produce executive-ready insights

4. Content Generation at Scale

Different models can handle:

- Research

- Draft creation

- Tone refinement

- Fact-checking

The result is higher-quality, more consistent content.

Integration Strategies: Best Practices for Implementing LLM Orchestration

Successfully implementing LLM orchestration requires thoughtful system design.

1. Define Clear Objectives

Clearly outline:

- What problems the system should solve

- Acceptable error margins

- Performance and cost constraints

These objectives guide model selection and workflow design.

2. Ensure Model Compatibility

Choose models that:

- Complement each other's strengths

- Share compatible input/output formats

- Align with latency and cost requirements

3. Add Guardrails and Validation

Always include:

- Schema validation

- Confidence checks

- Retry and fallback logic

This prevents unreliable outputs from reaching end users.
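
A confidence check with fallback might look like the sketch below. It assumes each model is a callable returning an answer and a confidence score between 0 and 1. How that score is obtained is left open, and the models, threshold, and `SAFE_REPLY` text are hypothetical.

```python
from typing import Callable, List, Tuple

# Assumption: each model returns (answer, confidence in [0, 1]).
Model = Callable[[str], Tuple[str, float]]

SAFE_REPLY = "I could not answer this reliably; escalating to a human."


def answer_with_fallback(
    models: List[Model], prompt: str, threshold: float = 0.8
) -> str:
    """Try models in order; return the first answer that clears the threshold."""
    for model in models:
        try:
            answer, confidence = model(prompt)
        except Exception:
            # A failing model is treated like a low-confidence one: move on.
            continue
        if confidence >= threshold:
            return answer
    # Nothing was confident enough, so do not pass a weak answer to the user.
    return SAFE_REPLY


def cheap_model(prompt: str) -> Tuple[str, float]:
    return "maybe Lyon", 0.4


def broken_model(prompt: str) -> Tuple[str, float]:
    raise TimeoutError("simulated outage")


def strong_model(prompt: str) -> Tuple[str, float]:
    return "Paris", 0.95


reply = answer_with_fallback(
    [cheap_model, broken_model, strong_model], "What is the capital of France?"
)
```

Ordering the list from cheapest to most capable keeps typical cost low while still giving hard requests a path to a stronger model.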

4. Monitor, Measure, and Iterate

Track:

- Latency

- Cost per request

- Failure rates

- Output quality
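
Three of these metrics (latency, cost per request, failure rate) can be collected with a thin wrapper around each model call, as in this sketch. The `Metrics` class, the `tracked` helper, and the flat per-call cost are assumptions for illustration; output quality usually needs separate evaluation and is not covered here.

```python
import time
from dataclasses import dataclass, field
from typing import Callable, List


@dataclass
class Metrics:
    latencies: List[float] = field(default_factory=list)  # seconds per request
    costs: List[float] = field(default_factory=list)      # cost per request
    failures: int = 0

    @property
    def requests(self) -> int:
        return len(self.latencies)

    def failure_rate(self) -> float:
        return self.failures / self.requests if self.requests else 0.0

    def mean_cost(self) -> float:
        return sum(self.costs) / len(self.costs) if self.costs else 0.0


def tracked(
    metrics: Metrics, call: Callable[[str], str], cost_per_call: float
) -> Callable[[str], str]:
    """Wrap a model call so every invocation is timed and counted."""
    def wrapper(prompt: str) -> str:
        start = time.perf_counter()
        try:
            return call(prompt)
        except Exception:
            metrics.failures += 1
            raise
        finally:
            # Runs on success and failure, so failed calls are also measured.
            metrics.latencies.append(time.perf_counter() - start)
            metrics.costs.append(cost_per_call)
    return wrapper


def flaky_model(prompt: str) -> str:
    # Stand-in for a real model call that sometimes errors out.
    if "fail" in prompt:
        raise ValueError("simulated model error")
    return prompt.upper()


metrics = Metrics()
model = tracked(metrics, flaky_model, cost_per_call=0.002)
for prompt in ["a", "b", "fail", "c"]:
    try:
        model(prompt)
    except ValueError:
        pass
```

After the loop, `metrics` holds four requests with one failure. In practice these numbers would be exported to whatever monitoring system the team already uses.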

Real-World Implementations: LLM Orchestration in Action

Organizations adopting LLM orchestration report:

- Reduced manual effort through automated workflows

- Higher accuracy due to multi-step validation

- Improved scalability across teams and use cases

- Better compliance with auditable decision flows

The Future of LLM Orchestration

As AI systems mature, orchestration will increasingly involve:

- Multi-agent collaboration

- Hybrid systems combining small and large models

- Event-driven and autonomous workflows

- Deeper integration into backend infrastructure

Conclusion

LLMs are individually powerful, but orchestration turns them into real systems.

By coordinating models, tools, memory, and workflows, LLM orchestration enables AI solutions that are:

- More reliable

- More scalable

- More interpretable

- More impactful

For developers and businesses looking to move beyond experiments and demos, LLM orchestration is no longer optional; it is foundational.